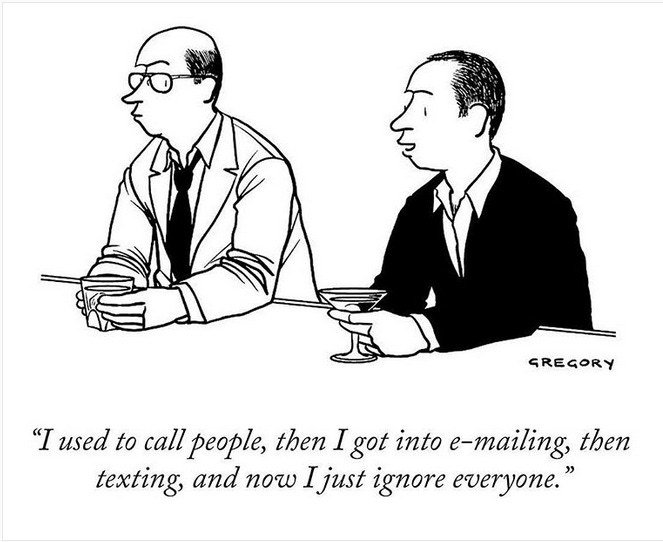

Welcome to part two of my special on AI and humour. This is a little overview of the field: not too techy — funny videos and cartoons below — and hopefully a good general introduction.

Just to set the parameters: I normally work with AI when developing conversational AI projects. Most recently, I did this for an international bank. This invol…
